AI-Assisted Research

Using AI to do more research, not less thinking.

The Challenge

An hour-long interview produces roughly 10,000 words. A study with eight participants produces 80,000. The bottleneck in qualitative research has rarely been the data itself. It is the time between collecting it and arriving at findings clear enough to act on.

AI tools compress that gap. Sorting, formatting, drafting, cross-referencing: the mechanical work that used to take days now takes hours. What AI does not do is the listening. Tone, hesitation, the moment a participant's posture shifts when a question lands wrong: these are the signals that tell a researcher what a transcript cannot, and they sit with the person in the room.

The quality of the research does not come from that speed. It comes from what the researcher does with the time it frees up.

How the work is divided

AI with human oversight. The model handles volume and speed. The researcher handles meaning. Anything the model produces is raw material: reviewed, interrogated, and reinterpreted by the researcher before it becomes a finding.

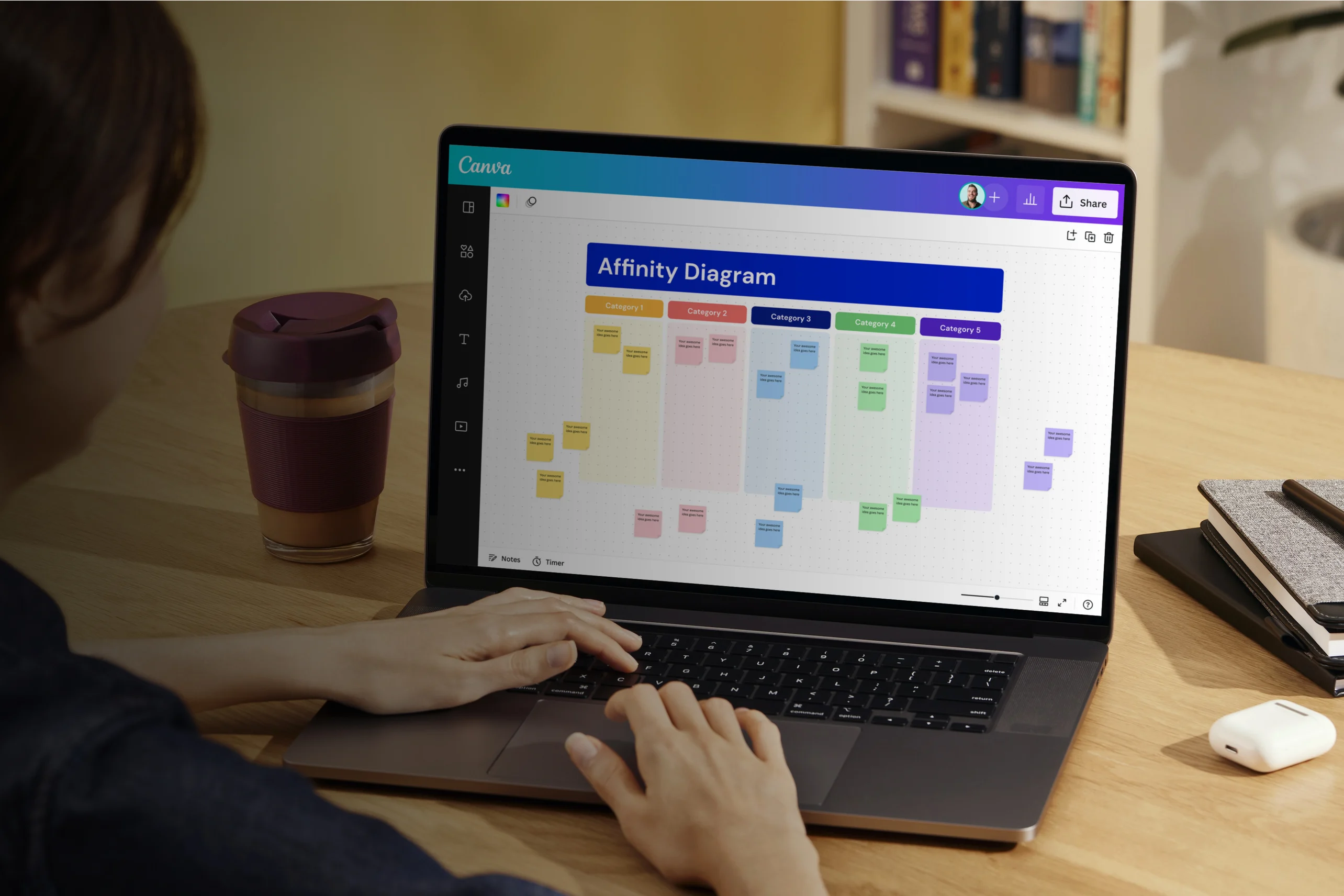

What the model handles:

- Transcript cleanup and segmentation

- Text-level clustering at scale

- Screener and discussion guide drafts

- Preliminary code suggestions

- Cross-referencing across sessions

- Report formatting and documentation

What the researcher handles:

- Interview rapport and participant trust

- Reading tone, silence, and body language

- Sitting with ambiguity and contradiction

- Methodological judgment at every step

- Surfacing insights and framing findings

- Review of AI outputs and stakeholder communication

The researcher pilots the study, reads what transcripts cannot show, and owns every finding.

Prompts are research instruments

Writing a good prompt works a lot like writing a good interview question. Be specific about what you need, leave room for what you didn't expect, and know in advance what a bad answer looks like. The same habits that make you careful with research questions make you careful with prompt engineering. The output is scaffolding for the researcher's thinking, not a shortcut around it.

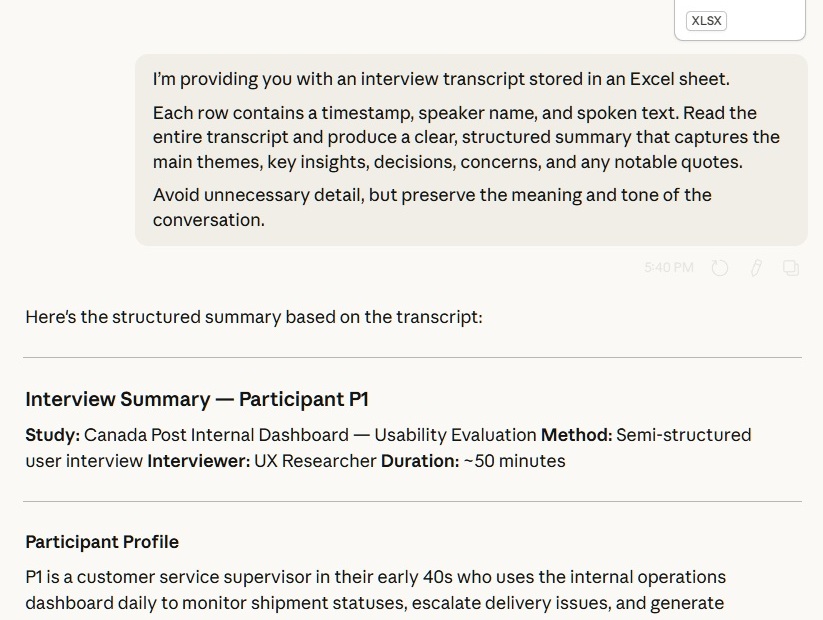

Input specification

Tells the model what the data is and how it's structured before it starts reading.

Structured extraction

Names the five categories the summary should capture: themes, insights, decisions, concerns, and quotes. The model organises what was said in one session. Cross-session synthesis, meaning what actually matters across participants, stays with the researcher.

Fidelity constraint

Trims verbosity while guarding meaning and tone. The model is told to be concise, but not at the cost of the conversational texture that often carries signal in qualitative data.
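For readers who assemble prompts in code, the three parts above can be sketched as a small template. This is a minimal illustration, not the prompt shown in the image: the function name `build_summary_prompt`, its parameters, and the exact wording of each instruction are assumptions. Only the three-part structure and the five category names come from the text.

```python
# The five categories named in the text. Everything else is illustrative.
CATEGORIES = ["themes", "insights", "decisions", "concerns", "quotes"]


def build_summary_prompt(transcript: str, data_description: str) -> str:
    """Assemble a single-session summary prompt from the three parts.

    Hypothetical helper: the wording of each part is an assumption.
    """
    # 1. Input specification: say what the data is and how it is structured.
    input_spec = f"The text below is {data_description}."

    # 2. Structured extraction: name the categories the summary must cover,
    #    and keep the model scoped to this one session.
    extraction = (
        "Summarise this one session under these headings: "
        + ", ".join(CATEGORIES)
        + ". Do not generalise beyond this session."
    )

    # 3. Fidelity constraint: be concise without flattening meaning or tone.
    fidelity = (
        "Be concise, but preserve the participant's meaning and tone. "
        "Quote verbatim where the wording itself matters."
    )

    return "\n\n".join(
        [input_spec, extraction, fidelity, "Transcript:\n" + transcript]
    )


# Example use with placeholder data (not a real interview).
prompt = build_summary_prompt(
    transcript="Interviewer: ...\nParticipant: ...",
    data_description="a cleaned transcript of one interview, with speaker labels",
)
print(prompt)
```

Keeping the three parts as separate strings makes each one easy to revise on its own, much as a discussion guide is revised question by question. The output is still raw material for the researcher to review.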

A note on confidentiality

To protect confidential business information, Claude.ai was asked to generate a fictional interview summary. The example above reflects the kind of outcomes my research typically produces. It is not real data and should not be cited as factual.

From insight to action

Getting findings into a room is only half the job. Getting them to stick is the other half. The way research is framed, sequenced, and delivered shapes whether it becomes a decision or a document nobody reads again.

Stakeholders don't act on data. They act on clarity. The researcher's job is to translate one into the other, and to know which parts of the evidence need context and which just need to be said plainly.

What good research looks like

It's not always the most thorough study or the longest report. It's the research that arrives at the right moment, framed in a way the team can use, and done honestly enough that people trust the conclusions even when they're uncomfortable.

AI doesn't get you there. It clears the runway so the researcher has the time, focus, and clarity to get there themselves. The most useful thing AI does in research isn't surfacing insights. It is buying back the time to develop them to a higher standard.

That's what I aim for. If you're working on something where the user experience matters and the decisions are genuinely hard, I'd be glad to be part of the conversation.

Let's connect

Have a research challenge?

Whether you have a project in mind or just want to talk through an idea, I'd like to hear from you.